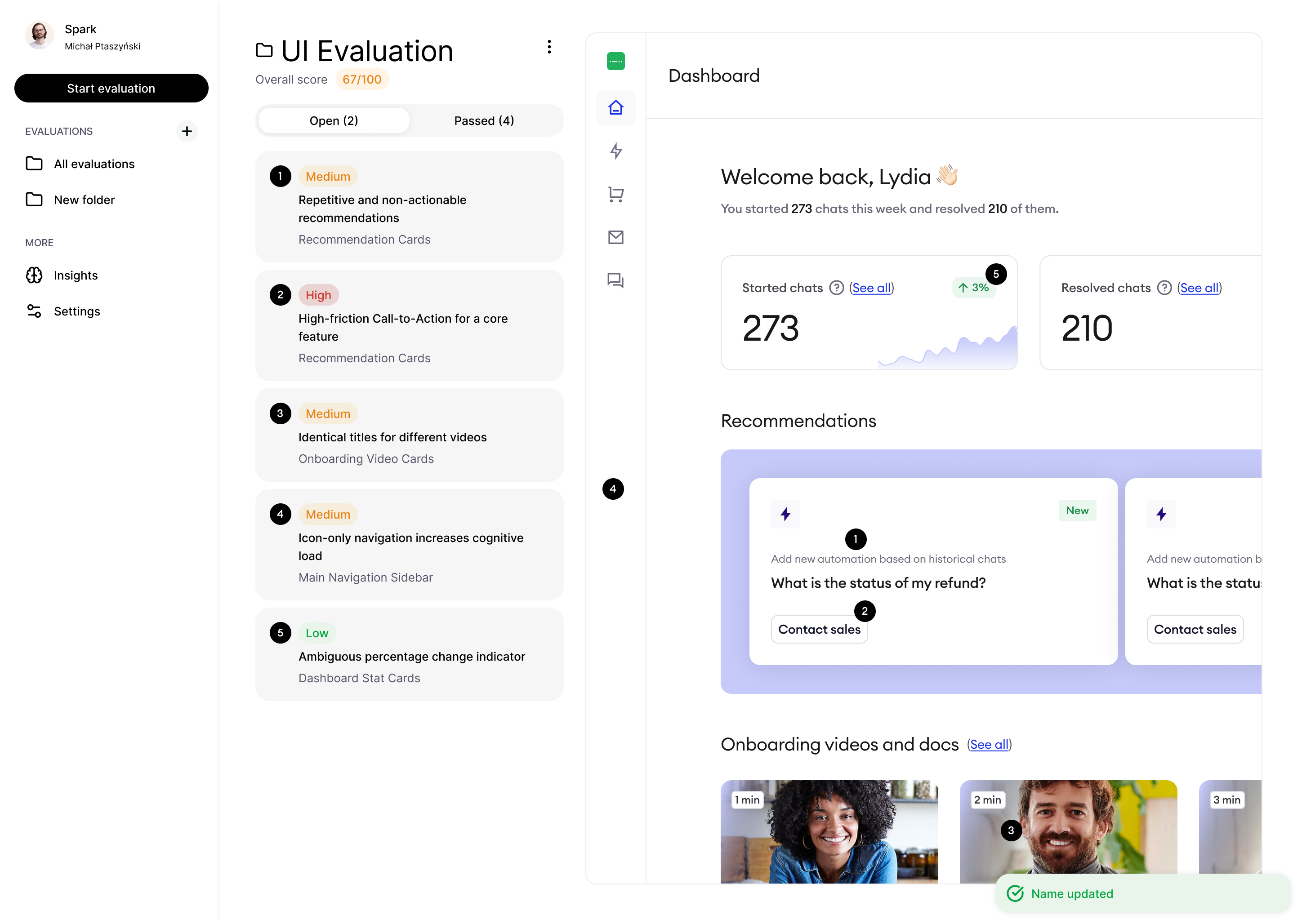

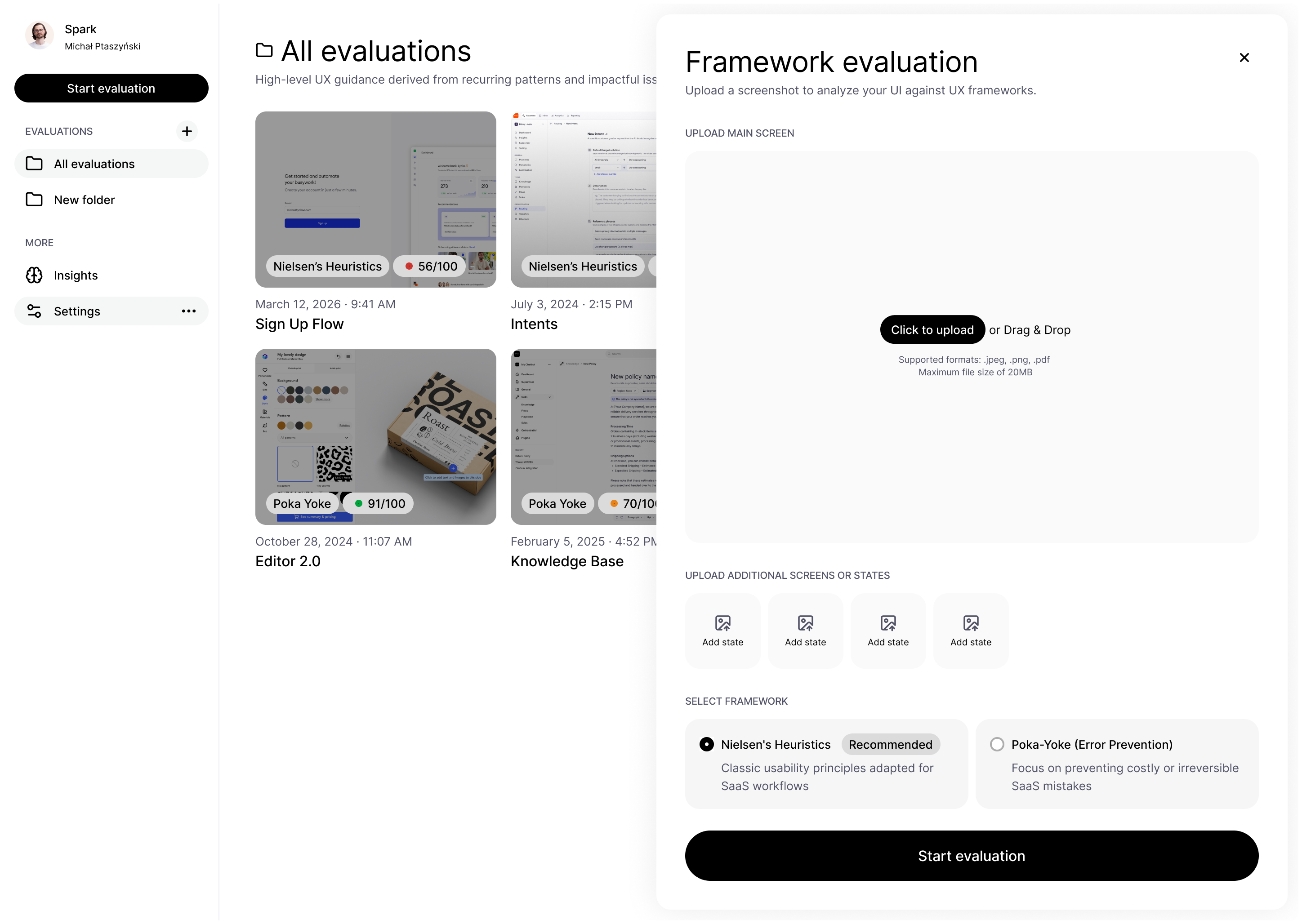

Spark — AI-powered UX evaluation tool

Spark is a private side project that I designed and built to explore AI-assisted heuristic analysis. It automated the evaluation of UI screens against design frameworks, surfaced recurring patterns across projects, and helped me understand the real limits of building AI-first products.

Problem

While designing interfaces, I regularly used AI models for heuristic evaluations: Nielsen's heuristics, poka-yoke checks, consistency reviews. The workflow functioned, but it was scattered and kept no history. Every analysis was a one-off that was difficult to compare with earlier ones, and there was no way to identify design problems recurring across multiple projects.

Solution

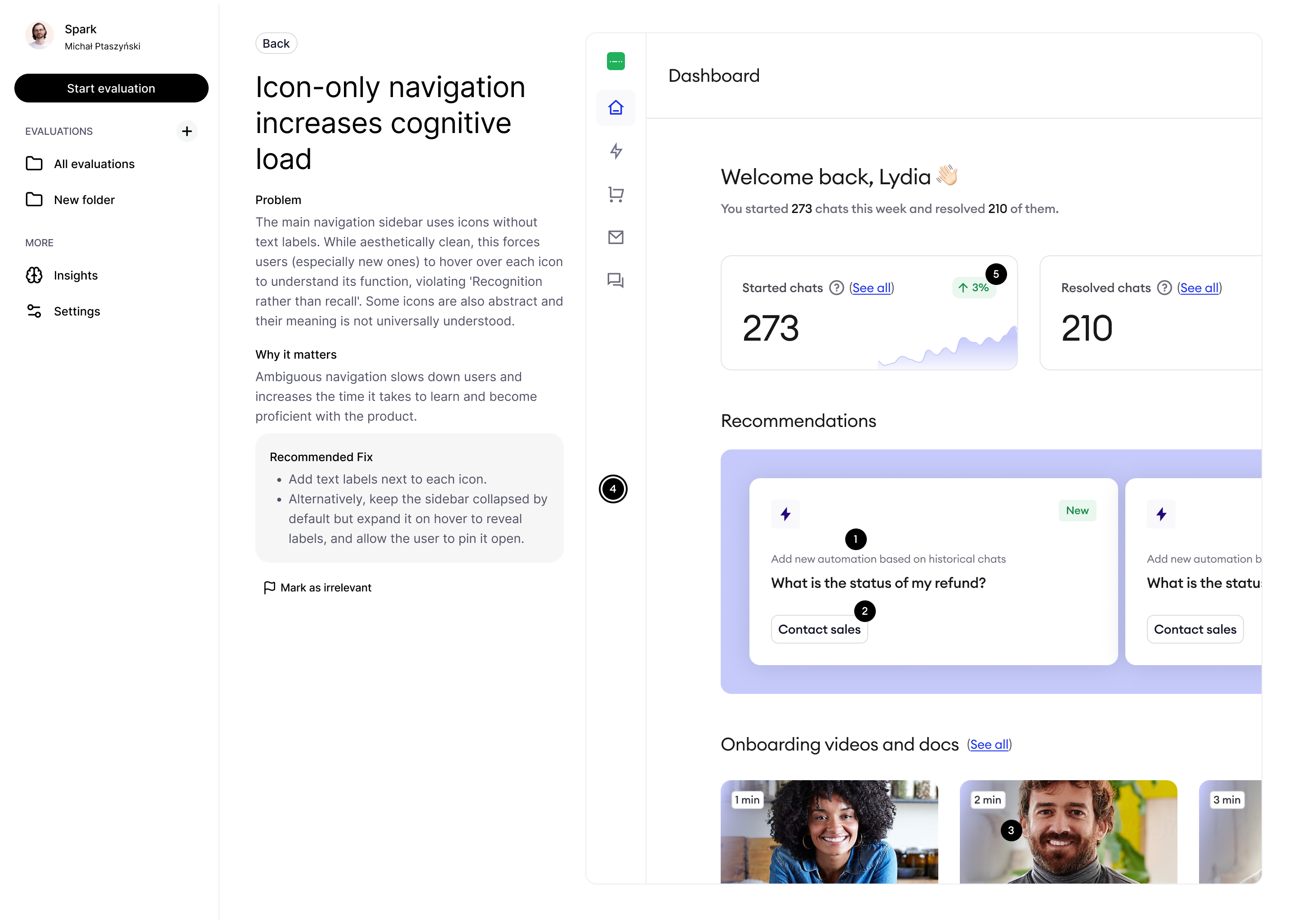

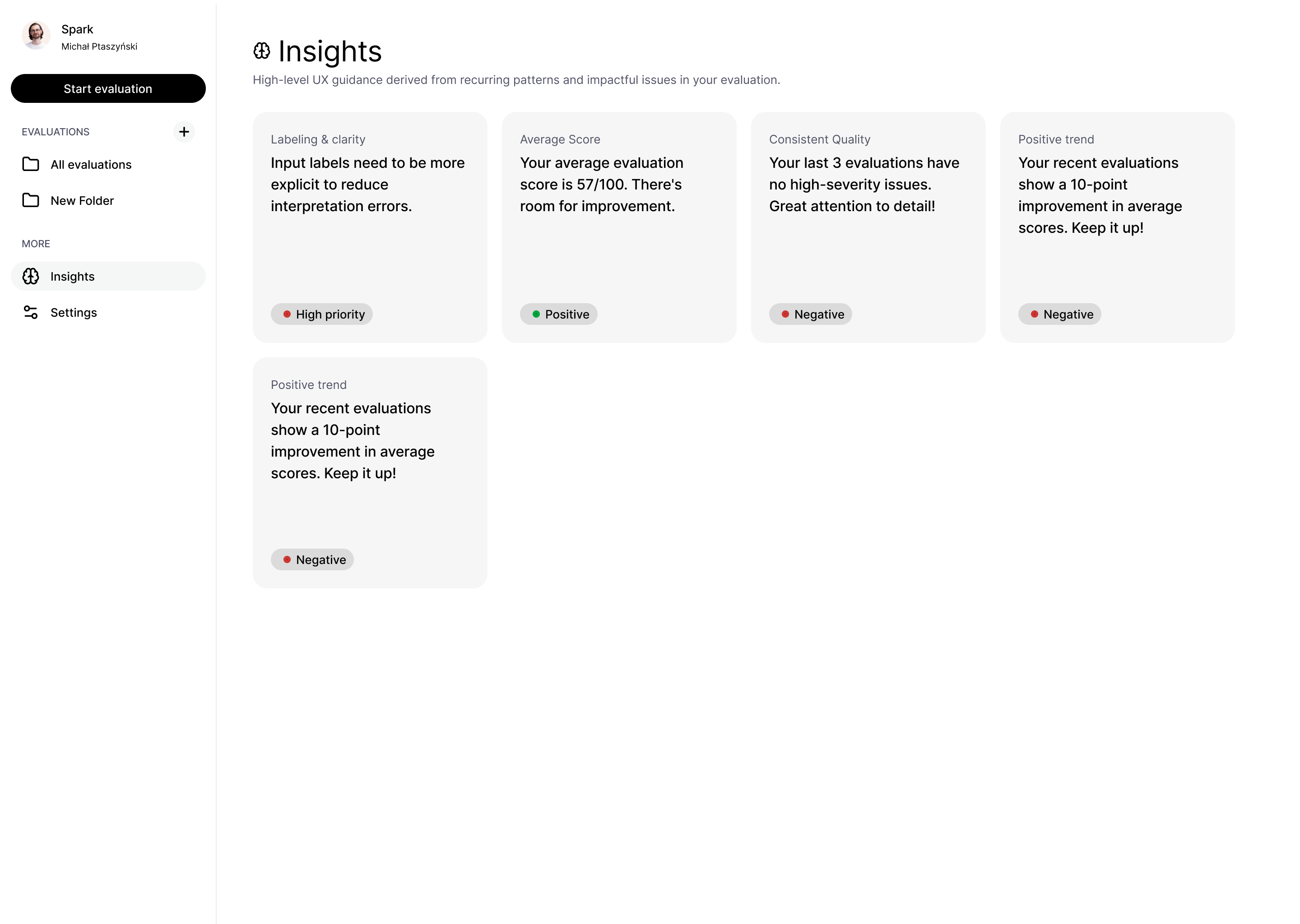

Spark automated UX screen analysis using AI models. Users could upload screens, run heuristic analyses based on different frameworks, and receive feedback covering both problems and well-designed elements. An insights layer tracked the most frequently recurring issues over time. The system analyzed multiple screens simultaneously, giving the model broader context of the entire flow rather than a single isolated view.
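The insights layer described above can be pictured as a frequency count over findings collected from many analyses. The following is a minimal sketch of that idea, not Spark's actual implementation: the `Finding` type, its fields, and the `recurring_issues` function are hypothetical names chosen for illustration.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    # All fields are illustrative assumptions, not Spark's real schema.
    project: str
    screen: str
    heuristic: str    # e.g. "Consistency and standards"
    is_problem: bool  # False for well-designed elements


def recurring_issues(findings, min_projects=2):
    """Return (heuristic, project_count) pairs for problems that appear
    in at least `min_projects` distinct projects, most widespread first."""
    # Count each heuristic once per project so that a single project
    # with many screens cannot dominate the ranking.
    pairs = {(f.heuristic, f.project) for f in findings if f.is_problem}
    counts = Counter(heuristic for heuristic, _ in pairs)
    # Sort by count (descending), then by name for a stable order.
    ranked = sorted(counts.items(), key=lambda kv: (-kv[1], kv[0]))
    return [(h, n) for h, n in ranked if n >= min_projects]
```

Counting per project rather than per screen is one possible design choice here: it favors problems that follow the designer from project to project over problems that are merely repeated within one flow.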

Results

The biggest challenge was LLM non-determinism — the same analysis could return different results for identical screens, affecting scoring consistency and trust in the feedback. This pushed me to think carefully about model guidelines, noise reduction, and trust-building mechanisms in AI-driven products. Balancing transparency with usability was equally tricky. Rather than capping detected issues, I let users dismiss suggestions they found irrelevant — keeping the system honest without overwhelming them.
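One common way to reduce this kind of noise is to run the same analysis several times and keep only the issues that most runs agree on, then filter out anything the user has dismissed. The sketch below illustrates that idea under stated assumptions; it is not a description of how Spark worked. It assumes each run has already been normalized into a list of stable issue identifiers (a hard problem in itself), and the names `stable_findings`, `dismissed`, and `min_share` are hypothetical.

```python
from collections import Counter


def stable_findings(runs, dismissed=frozenset(), min_share=0.6):
    """Keep issue IDs reported in at least `min_share` of the runs
    and not dismissed by the user. Returns a sorted list."""
    if not runs:
        return []
    # Count each issue once per run, even if a run reports it twice.
    counts = Counter(issue for run in runs for issue in set(run))
    threshold = min_share * len(runs)
    return sorted(
        issue
        for issue, n in counts.items()
        if n >= threshold and issue not in dismissed
    )


# Three hypothetical runs over the same screen.
runs = [
    ["low-contrast", "no-undo"],
    ["low-contrast"],
    ["low-contrast", "no-undo", "tiny-tap-target"],
]
print(stable_findings(runs, dismissed={"no-undo"}))
```

In this sketch, dismissal is applied after the consensus step, so a dismissed issue stays hidden even when every run reports it, while the number of issues shown is never capped.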